|

Haoxiang Ma (马浩翔)

I am a Young Researcher at Shanghai Artificial Intelligence Laboratory, with research interests in Vision-Language-Action (VLA) models and robotic manipulation. I received my Ph.D. from Beihang University in Nov. 2025, advised by Di Huang. |

|

Research

I'm interested in robotic learning, computer vision, and embodied AI. Most of my research is about inferring grasp poses from images and point clouds. |

|

InternVLA-A1: Unifying Understanding, Generation and Action for Robotic Manipulation

, , , Haoxiang Ma, arXiv, 2026

project / paper / code / video / data

A unified VLA framework that combines understanding, visual foresight, and action generation for robust robotic manipulation in dynamic and static scenarios. |

|

GraspLDP: Towards Generalizable Grasping Policy via Latent Diffusion

, Haoxiang Ma*, , , CVPR, 2026

project / paper / arXiv / video / code (coming soon)

GraspLDP injects grasp priors into latent diffusion policy learning to improve grasp precision and generalization in both simulation and real-world manipulation. |

|

Active Perception for Grasp Detection via Neural Graspness Field

Haoxiang Ma, , , NeurIPS, 2024

code / paper

An active perception method for grasp detection that introduces the neural graspness field, which models the grasp distribution of a scene. |

|

Generalizing 6-DoF Grasp Detection via Domain Prior Knowledge

Haoxiang Ma, , , CVPR, 2024

code / paper / video

A generalizable 6-DoF grasp detection framework that uses domain prior knowledge of robotic grasping. |

|

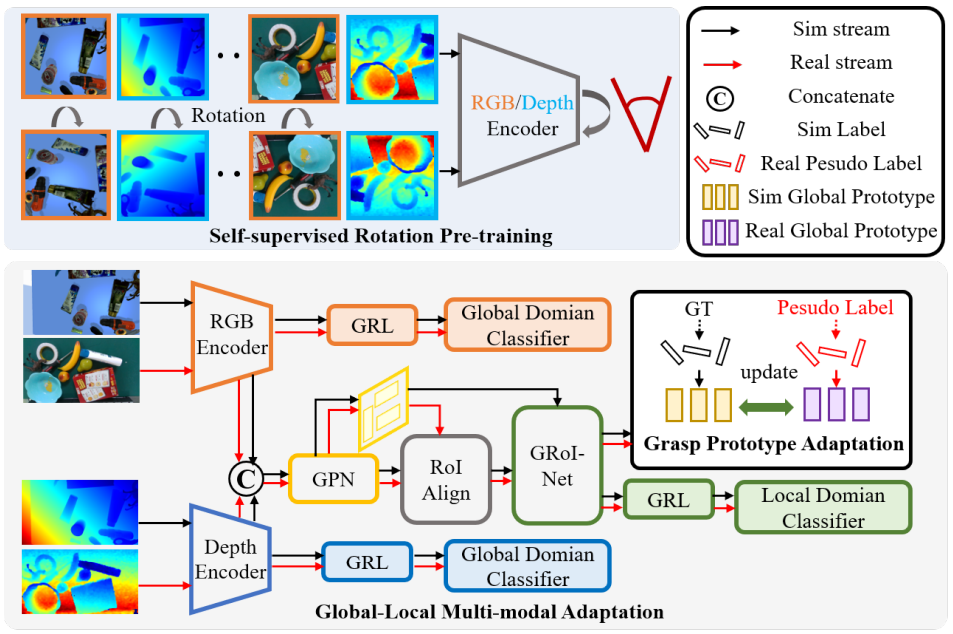

Sim-to-Real Grasp Detection with Global-to-Local RGB-D Adaptation

Haoxiang Ma*, , , , ICRA, 2024

code / paper

We present a global-to-local method to address hybrid domain gaps in RGB and depth data and insufficient multi-modal feature alignment. |

|

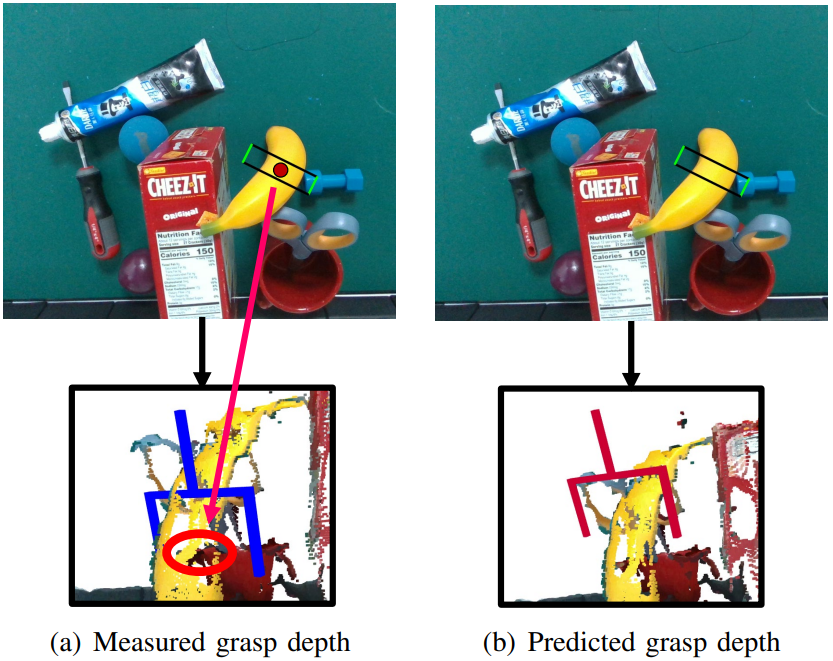

RGB-D Grasp Detection via Depth Guided Learning with Cross-modal Attention

, Haoxiang Ma, , ICRA, 2023

code / paper

We build a depth-guided learning framework in which both RGB and depth images are taken as input and their features are combined to generate grasp proposals. |

|

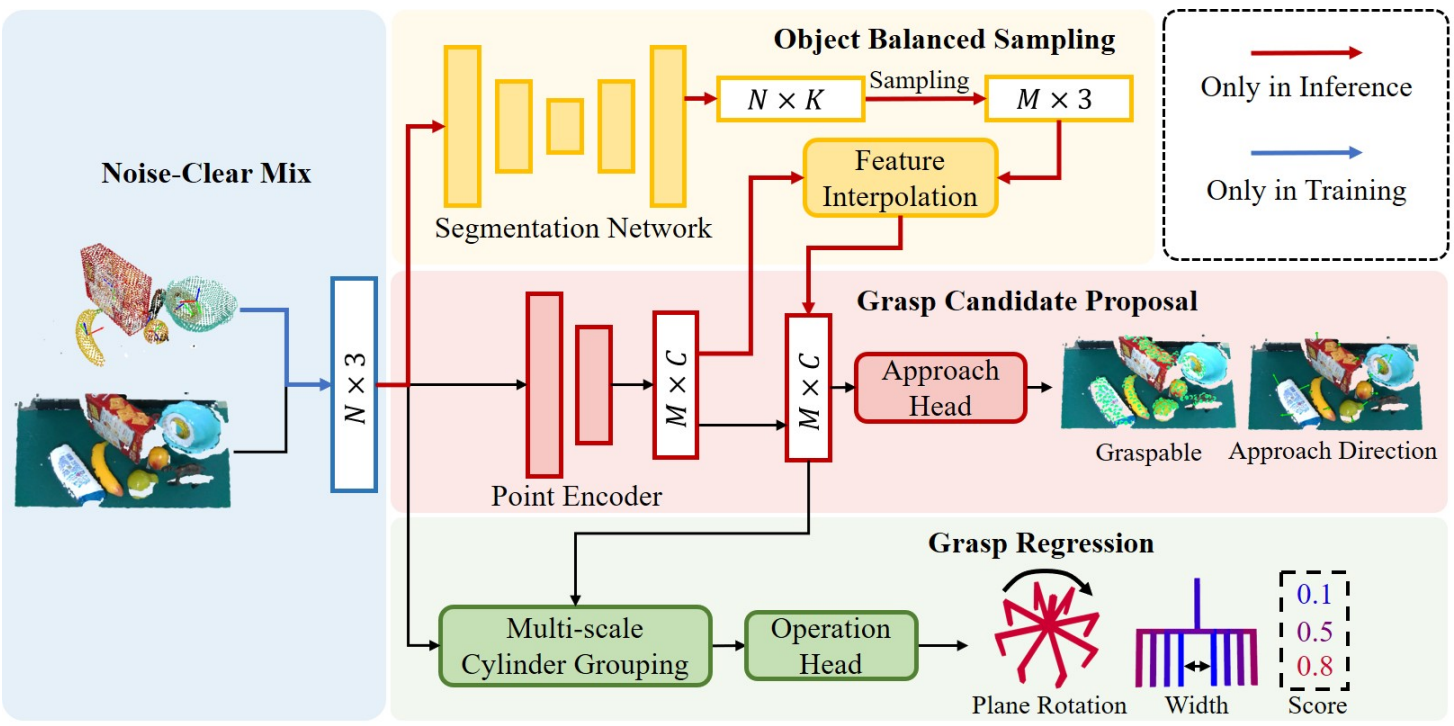

Towards Scale Balanced 6-DoF Grasp Detection in Cluttered Scenes

Haoxiang Ma, CoRL, 2022

code / paper / video

We focus on feature learning under scale imbalance for 6-DoF grasp detection and propose an approach that specifically addresses the difficulty of handling small-scale samples. |

|

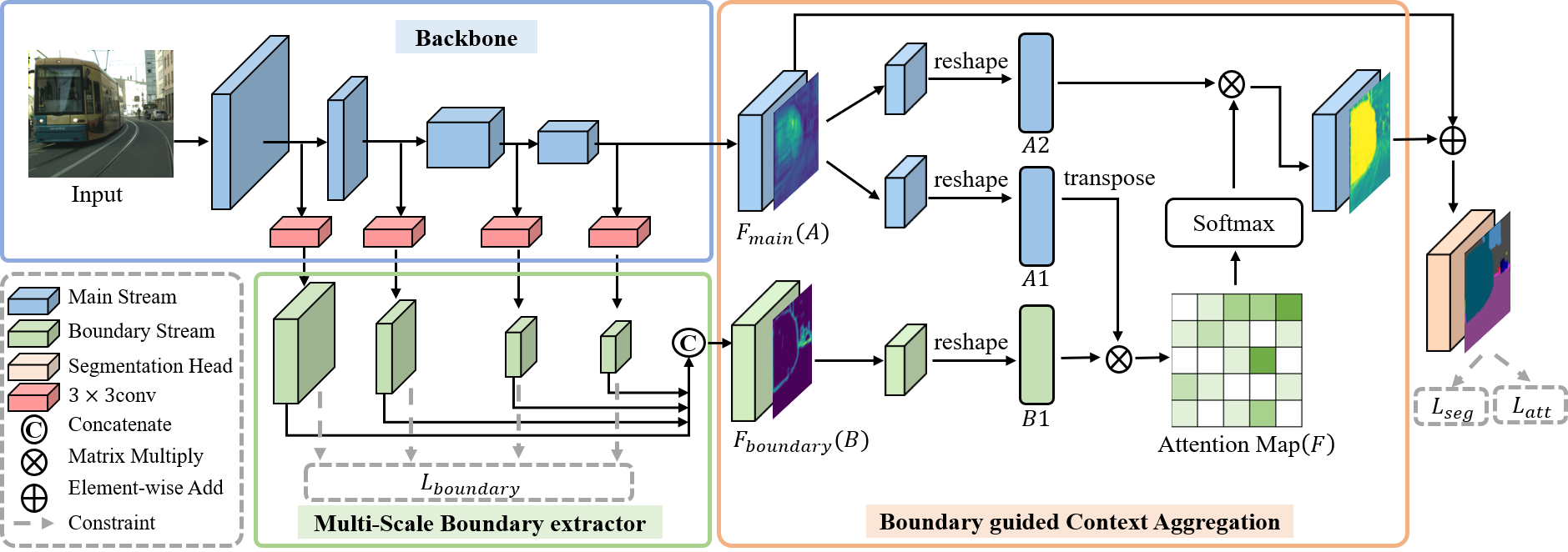

Boundary Guided Context Aggregation for Semantic Segmentation

Haoxiang Ma, , BMVC, 2021

code / paper

We exploit boundaries as significant guidance for context aggregation to promote the overall semantic understanding of an image.

Miscellanea |

Academic Service |

Reviewer for CVPR, CoRL, ICLR, NeurIPS, ICML, RA-L, etc. |

|

|